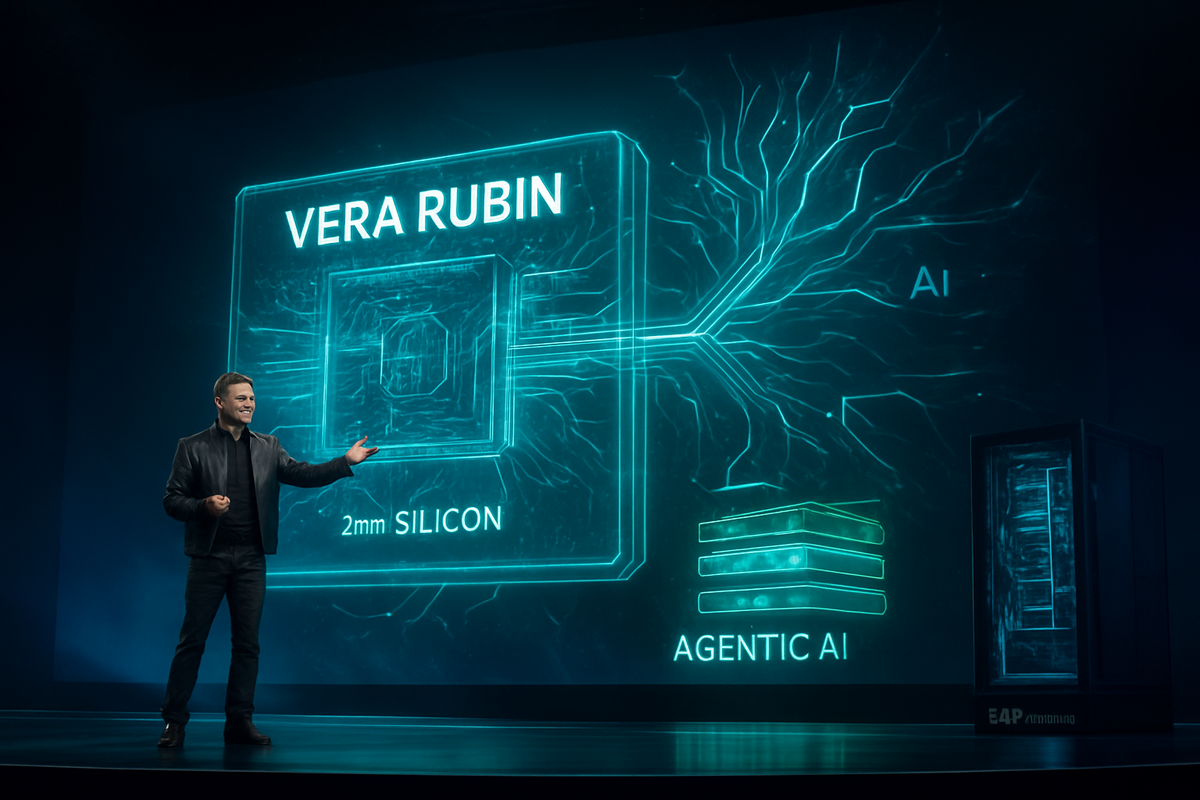

SAN JOSE, CA — The annual Nvidia GTC conference kicked off today, March 16, 2026, with a high-octane keynote from CEO Jensen Huang that signaled a fundamental shift in the landscape of artificial intelligence. Standing before a packed audience at the SAP Center, Huang unveiled the "Vera Rubin" AI platform, the highly anticipated successor to the Blackwell architecture. Named after the pioneering astronomer who provided evidence for dark matter, the Rubin architecture is designed not just to process data, but to power the era of "Agentic AI"—autonomous systems capable of complex reasoning, planning, and physical interaction.

The immediate implications of the Vera Rubin launch are profound. As global data centers struggle with the dual pressures of skyrocketing AI demand and capacity-constrained power grids, Nvidia (NASDAQ: NVDA) is promising a massive leap in energy efficiency and compute density. By shifting to a one-year release cycle, Nvidia has accelerated the pace of silicon innovation, leaving competitors scrambling to match a roadmap that shows no signs of slowing down. For the market, the announcement reinforces Nvidia’s iron grip on the AI training and inference sectors, even as the company pivots toward becoming a full-stack robotics and "AI factory" provider.

The unveiling of Vera Rubin marks the culmination of a strategic pivot Nvidia initiated two years ago. While the previous Blackwell line (B200) focused on scaling Large Language Models (LLMs) to trillion-parameter heights, the Rubin R100 GPU is built for the "Reasoning" era. Built on Taiwan Semiconductor Manufacturing Company’s (NYSE: TSM) cutting-edge 3nm (N3P) process, the Rubin GPU packs 336 billion transistors, roughly 1.6 times the 208 billion of its predecessor.

A critical highlight of the keynote was the introduction of HBM4 (High Bandwidth Memory 4). Nvidia confirmed it has secured the lion's share of HBM4 supply from partners like SK Hynix and Samsung (OTC: SSNLF), providing the Vera Rubin GPUs with a record-breaking 22 TB/s of memory bandwidth. This allows for "local" residency of massive models, drastically reducing the latency that currently plagues complex AI "agents" that must think before they act. The timeline for the rollout is aggressive: initial Vera Rubin samples are expected to ship to tier-one cloud providers by late 2026, with full-scale production hitting the market in early 2027.
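To put the 22 TB/s figure in perspective, here is a rough back-of-the-envelope sketch. It assumes decoding is memory-bound, meaning every generated token requires streaming the full set of model weights from memory once, so bandwidth sets a floor on per-token latency. The model size, the 4-bit weight precision, and the 8 TB/s comparison baseline are illustrative assumptions, not figures from the keynote.

```python
def min_seconds_per_token(params: float, bits_per_param: int, bandwidth_tb_s: float) -> float:
    """Lower bound on per-token latency when decoding is memory-bound.

    Assumes all weights are read from memory exactly once per token.
    """
    weight_bytes = params * bits_per_param / 8
    return weight_bytes / (bandwidth_tb_s * 1e12)


# Hypothetical 70-billion-parameter model stored at 4 bits per weight.
PARAMS = 70e9
BITS = 4

rubin = min_seconds_per_token(PARAMS, BITS, 22.0)    # bandwidth quoted in the article
baseline = min_seconds_per_token(PARAMS, BITS, 8.0)  # assumed prior-generation bandwidth

print(f"22 TB/s: {rubin * 1e3:.2f} ms per token")
print(f" 8 TB/s: {baseline * 1e3:.2f} ms per token")
```

Real systems batch requests, cache intermediate state, and split models across GPUs, so measured latency will differ. The sketch only illustrates why memory bandwidth matters for agents that generate many tokens before acting.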

The industry reaction was instantaneous. Shares of Nvidia saw a pre-market surge as analysts digested the performance metrics: a 5x increase in inference throughput using the new NVFP4 (4-bit floating point) format. Key stakeholders, including executives from Microsoft (NASDAQ: MSFT) and Meta (NASDAQ: META), were reportedly in attendance, eager to get their hands on the Vera Rubin NVL144—a liquid-cooled rack-scale supercomputer that Jensen Huang described as "the new unit of compute for the modern enterprise."
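For readers curious what a 4-bit floating point format looks like in practice, the following is a minimal sketch of block-scaled quantization in the style of NVFP4. It assumes an E2M1 layout (1 sign bit, 2 exponent bits, 1 mantissa bit) and a shared scale per block of 16 values. The function names are hypothetical, and the scale is kept as an ordinary Python float here rather than being stored in a compact 8-bit format, so this is an illustration of the idea and not Nvidia's implementation.

```python
# Magnitudes representable by a 4-bit E2M1 float (sign bit handled separately).
E2M1_VALUES = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]
BLOCK_SIZE = 16  # assumed number of values sharing one scale factor


def quantize_block(block):
    """Return (scale, codes): codes are signed E2M1 values, scale restores magnitude."""
    peak = max(abs(x) for x in block)
    # Map the largest magnitude in the block onto the largest E2M1 value (6.0).
    scale = peak / E2M1_VALUES[-1] if peak > 0 else 1.0
    codes = []
    for x in block:
        target = abs(x) / scale
        nearest = min(E2M1_VALUES, key=lambda v: abs(v - target))
        codes.append(nearest if x >= 0 else -nearest)
    return scale, codes


def dequantize_block(scale, codes):
    """Reconstruct approximate values from the shared scale and 4-bit codes."""
    return [scale * c for c in codes]


weights = [0.02, -0.11, 0.37, 0.05, -0.29, 0.18, 0.0, 0.44,
           -0.08, 0.13, -0.41, 0.26, 0.09, -0.03, 0.31, -0.22]
assert len(weights) == BLOCK_SIZE

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
worst = max(abs(a - b) for a, b in zip(weights, restored))
print(f"scale={scale:.4f}, worst-case error={worst:.4f}")
```

Each weight shrinks from 16 or 8 bits to 4, which is where throughput and memory savings of this kind come from. The trade-off is the rounding error visible in the output.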

The primary winner of this announcement is undoubtedly Nvidia itself, which continues to trade at the top of the global market capitalization rankings, currently hovering between $4.5 trillion and $5 trillion. By maintaining a 90% share of the high-end AI accelerator market, Nvidia has turned its hardware into a de facto standard that software developers cannot ignore. Taiwan Semiconductor Manufacturing Company also stands to gain significantly; as the sole fabricator of the 3nm Rubin chips and the provider of advanced CoWoS (Chip-on-Wafer-on-Substrate) packaging, TSMC remains the indispensable backbone of the AI era.

Suppliers of high-bandwidth memory are also in the winner's circle. SK Hynix and Micron Technology (NASDAQ: MU) are expected to see sustained demand for HBM4, a component that represents an ever-increasing percentage of the total bill of materials for AI servers. Furthermore, server integrators like Super Micro Computer (NASDAQ: SMCI) and Dell Technologies (NYSE: DELL) are poised to benefit as enterprises move to replace aging Blackwell and Hopper clusters with the more power-efficient Rubin architecture.

Conversely, traditional competitors face a widening "innovation gap." Advanced Micro Devices (NASDAQ: AMD), despite making strides with its MI450X series, is now under immense pressure to accelerate its own roadmap to match Nvidia’s yearly cadence. Similarly, "hyperscaler" internal chip projects at Alphabet (NASDAQ: GOOGL) and Amazon (NASDAQ: AMZN) face a moving target. While Google’s TPU v6 remains a formidable internal tool, the sheer versatility and software ecosystem of Nvidia’s CUDA platform, now optimized for Rubin, make it difficult for proprietary silicon to win over the broader developer market.

The significance of the Vera Rubin architecture extends beyond mere speed; it represents a milestone in the "Industrial Revolution of AI." For years, the industry focused on "System 1" AI: fast and intuitive, but often prone to hallucinations. Huang’s GTC keynote made it clear that Nvidia is now targeting "System 2" AI: deliberative, multi-step reasoning. This shift is essential for the transition to "Physical AI," where Rubin-based chips will serve as the "brains" for humanoid robots and autonomous industrial plants.

This event also highlights a critical regulatory and policy trend: "Sovereign AI." Many nations are now racing to build their own domestic AI factories to ensure data security and economic competitiveness. By offering the Vera Rubin architecture as a turn-key solution in the form of the NVL72 and NVL144 racks, Nvidia is positioning itself as the primary partner for national governments. This could lead to increased scrutiny from antitrust regulators concerned about a "monoculture" in AI hardware, though Nvidia’s defense remains its relentless pace of innovation that benefits the entire ecosystem.

Historically, this moment is being compared to the transition from the mainframe to the PC, or the PC to the cloud. However, the scale is vastly different. In 2026, the data center is no longer a collection of servers; it is a single, massive, distributed computer. The Rubin architecture is the first designed from the ground up to operate at this "rack-scale," effectively making the individual chip an obsolete metric for performance.

Looking ahead, the short-term focus will be on the supply chain’s ability to keep up with Rubin’s sophisticated requirements. The transition to 3nm and HBM4 is fraught with yield challenges, and any hiccup in TSMC’s production lines could lead to significant market volatility. However, if Nvidia executes as planned, the long-term possibility of "Agentic AI" becoming a trillion-dollar software market moves from speculation to reality.

Strategic pivots are already appearing on the horizon. With the Rubin architecture, Nvidia is moving deeper into the software layer with its "Nvidia NIM" agents. This move could put the company in competition with some of its largest customers: the cloud service providers who also want to own the AI agent layer. Investors should watch for how Microsoft and Amazon respond to Nvidia’s encroachment into the software application space.

The next major milestone is already being whispered about in the halls of GTC: "Feynman," the architecture expected to follow Rubin in 2027. By shortening the product lifecycle to 12 months, Nvidia has created a "treadmill effect" that requires customers to upgrade constantly to remain competitive. That creates a large, predictable recurring revenue stream for the company, but also a capital expenditure challenge for the rest of the industry.

Nvidia’s GTC 2026 keynote has set a new benchmark for the technology industry. The Vera Rubin architecture is not just a faster chip; it is a declaration of dominance in the next phase of computing. By integrating 3nm technology, HBM4 memory, and a focus on reasoning-based AI, Nvidia has addressed the critical bottlenecks of power and latency that have threatened to slow the AI boom.

For the market, the message is clear: Nvidia remains the undisputed leader of the AI super-cycle. Moving forward, the industry will be defined by the "AI Factory," where compute is treated as a utility and intelligence is the primary export. The success of the Rubin rollout will be the primary bellwether for the tech sector throughout 2026 and into 2027.

Investors should closely monitor TSMC’s 3nm yield reports and the progress of HBM4 validation over the coming months. As Nvidia continues to outpace its rivals, the primary risks move from "competitive threat" to "execution risk" and "geopolitical tension." For now, however, Jensen Huang’s vision of a world powered by Rubin-accelerated agents has the market looking toward a future where AI is not just a tool, but an autonomous participant in the global economy.

This content is intended for informational purposes only and is not financial advice.